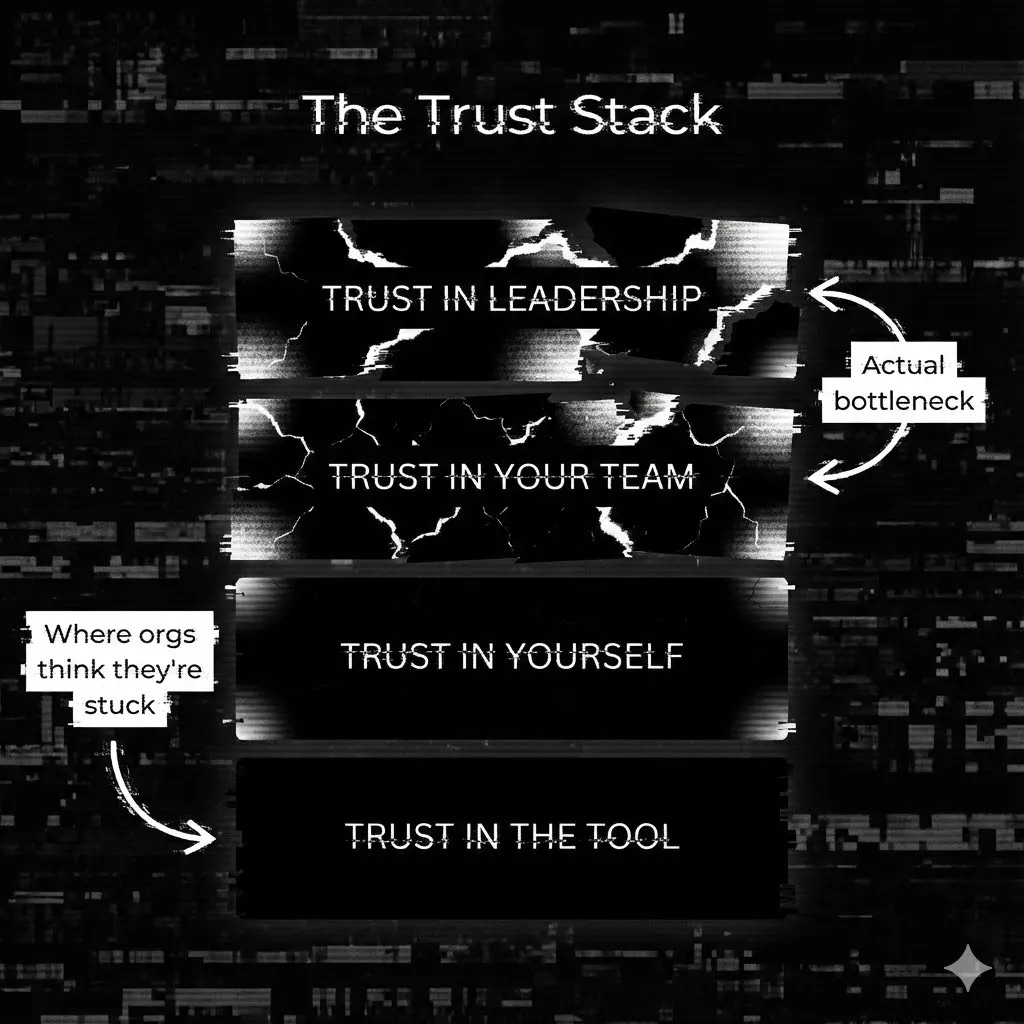

The Trust Stack

Or, Why AI Adoption Breaks Down Before It Starts

This piece builds on the Vibe Marketing Manifesto, which I wrote for MarTech.org and cross-published here.

Two conversations since the new year. Different companies, different roles.

Both about AI. Both hit the same wall.

Trust.

A CEO described his real blocker: getting his team to trust themselves. They have the tools. They have permission. What they don’t have is confidence in their own judgment when the output is “probably right” instead of “definitely right.”

A consultant I know took a different path. He vibe-coded a SaaS platform out of his domain expertise: no engineering team, no fundraise, just decades of pattern recognition with AI as the accelerant. He’s now selling it. Same tools available to everyone. Different relationship with trust.

One organization is stuck. One individual shipped.

The difference isn’t technology. It’s trust operating at different layers.

The Old Trust Model

In The Five Dysfunctions of a Team, Patrick Lencioni nailed it years ago: trust is the foundation. Without it, teams won’t engage in conflict, commit to decisions, hold each other accountable, or focus on results.

But Lencioni’s model assumed a deterministic world.

You ship a feature. It works or it doesn’t. The outcome is binary. Trust means believing your teammates will do what they said they’d do.

AI broke that model.

The Probabilistic Shift

We spent 30 years training managers to eliminate variance.

Six Sigma. Process optimization. Playbooks. Best practices.

The goal was predictability. Reduce risk by reducing variability.

Now the tools that matter most are built on variance. LLMs don’t give you the answer. They give you an answer—one that’s probably right, sometimes wrong, and never exactly the same twice.

This isn’t a bug. It’s the architecture.

And it requires a completely different relationship with trust.

The Trust Stack

I’ve started thinking about this as a stack. Four layers that have to be intact before AI adoption can actually work.

This isn’t a formal model. It’s a diagnostic lens: a way to figure out where you’re actually stuck instead of where you think you’re stuck.

Layer 1: Trust in the tool

Does this actually work? Is the output reliable enough to act on?

Most organizations think they’re stuck here. They’re not.

Layer 2: Trust in yourself

Can I evaluate this output? Do I have the judgment to know when 90% is good enough and when it’s not?

This is where the CEO’s team was stuck. They have the skills and the domain expertise. But they’ve been trained to defer to process, not to trust their own pattern recognition.

The consultant who shipped? He trusted his domain expertise to evaluate outputs. He didn’t wait for permission or process. He built, tested, and iterated.

AI asks something different of people: trust your judgment, ship, and iterate. For professionals who built careers on being right the first time, that’s an identity-level shift.

Layer 3: Trust in your team

Will my colleagues use good judgment? Can I ship something knowing someone else might iterate on it without me?

Layer 4: Trust in leadership

Will my manager back my experiments? If I ship something that’s 90% right and iterate in public, will I be rewarded for speed or punished for imperfection?

This is where organizations stall. Management wants certainty before approval. Talent wants air cover before experimenting. Teams want process before shipping.

Everyone waits for someone else to go first.

The deterministic model is too rigid for the speed AI makes possible. But nobody has permission to abandon it.

The consultant didn’t have this problem. He was the individual contributor, the team, and the leadership. The entire trust stack resolved to one person, so he shipped.

The Real Bottleneck

Here’s the pattern:

Companies stuck on AI adoption almost always diagnose it as Layer 1. “We need better tools.” “The AI isn’t reliable enough.” “We’re waiting for the technology to mature.”

But when I dig in, the failure is almost always Layer 2, 3, or 4.

The CEO doesn’t have a technology problem. In fact, he has so much technology it’s nearly overwhelming. What he does have is people who don’t trust their own expertise to evaluate probabilistic outputs.

The consultant didn’t have superior technology. He had nothing more than Claude Code and a hosting platform. What set him apart was a compressed trust stack and the willingness to ship before he was certain.

Both had access to the same tools. One organization accumulated trust debt. One individual shipped a product.

This isn’t just an operational problem. It’s a revenue problem.

Every week spent waiting for certainty is a week your competitors are shipping, learning, and compounding advantage. The trust gap shows up in pipeline. It shows up in win rates. It shows up in the board meeting when someone asks why AI investment hasn’t moved the number.

What This Means

If your AI initiatives are stuck, stop auditing tools. Start auditing trust.

Ask:

Do individual contributors trust their own judgment to evaluate AI outputs?

Do teams trust each other enough to ship imperfect work and iterate?

Does leadership reward speed and learning, or punish variance?

The fix isn’t a 6-month trust-building initiative. It’s smaller than that.

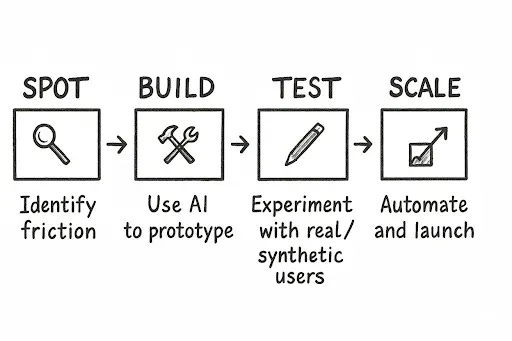

It’s the high-level process I laid out in the original manifesto: Spot > Build > Test > Scale.

The companies shipping AI outcomes aren’t the ones with the best models or tools.

They’re the ones who rebuilt trust from the foundation up.

They learned to operate in a world where “probably right” is the new “done.”

Your playbook is broken. Here’s what comes next.